Automotive Grade Linux (AGL) is a collaborative open source project that is bringing together automakers, suppliers and technology companies to accelerate the development and adoption of a fully open software stack for the connected car.

As part of the AGL Speech Expert Group (EG), the Amazon (Alexa Automotive) team intends to collaborate to help define the voice service APIs in the AGL platform.

These are a standard set of APIs defined by the AGL Speech EG for users and applications to interact with the voice assistant software of their choice running on the system. The API is flexible and attempts to provide a solution for use cases that span running one or multiple voice assistants on AGL-powered car head units at the same time.

A voice agent is a virtual voice assistant that takes audio utterances from the user as input, runs its own speech recognition and natural language processing algorithms on the audio input, and generates intents for applications on the system or for applications in its own cloud to perform user-requested actions. This is vendor-supplied software that needs to comply with a standard set of APIs defined by the AGL Speech EG to run on AGL.

The purpose of this document is to propose the Voice Service and Voice Agent APIs for the AGL Speech framework, and to evolve them into a full-blown multi-agent architecture that car OEMs can use to enable voice experiences of their choice.

Customer A: As an IVI head unit user, I would like to have the following experiences:

Experience A: With only one active voice agent.

Customer B: As a car OEM,

Customer C: As an AGL Application developer,

Customer D: As a 3rd party Voice Agent Vendor,

Quoting AGL documentation,

http://docs.automotivelinux.org/docs/apis_services/en/dev/reference/signaling/architecture.html#architecture

“Good practice is often based on modularity with clearly separated components assembled within a common framework. Such modularity ensures separation of duties, robustness, resilience, achievable long term maintenance and security.”

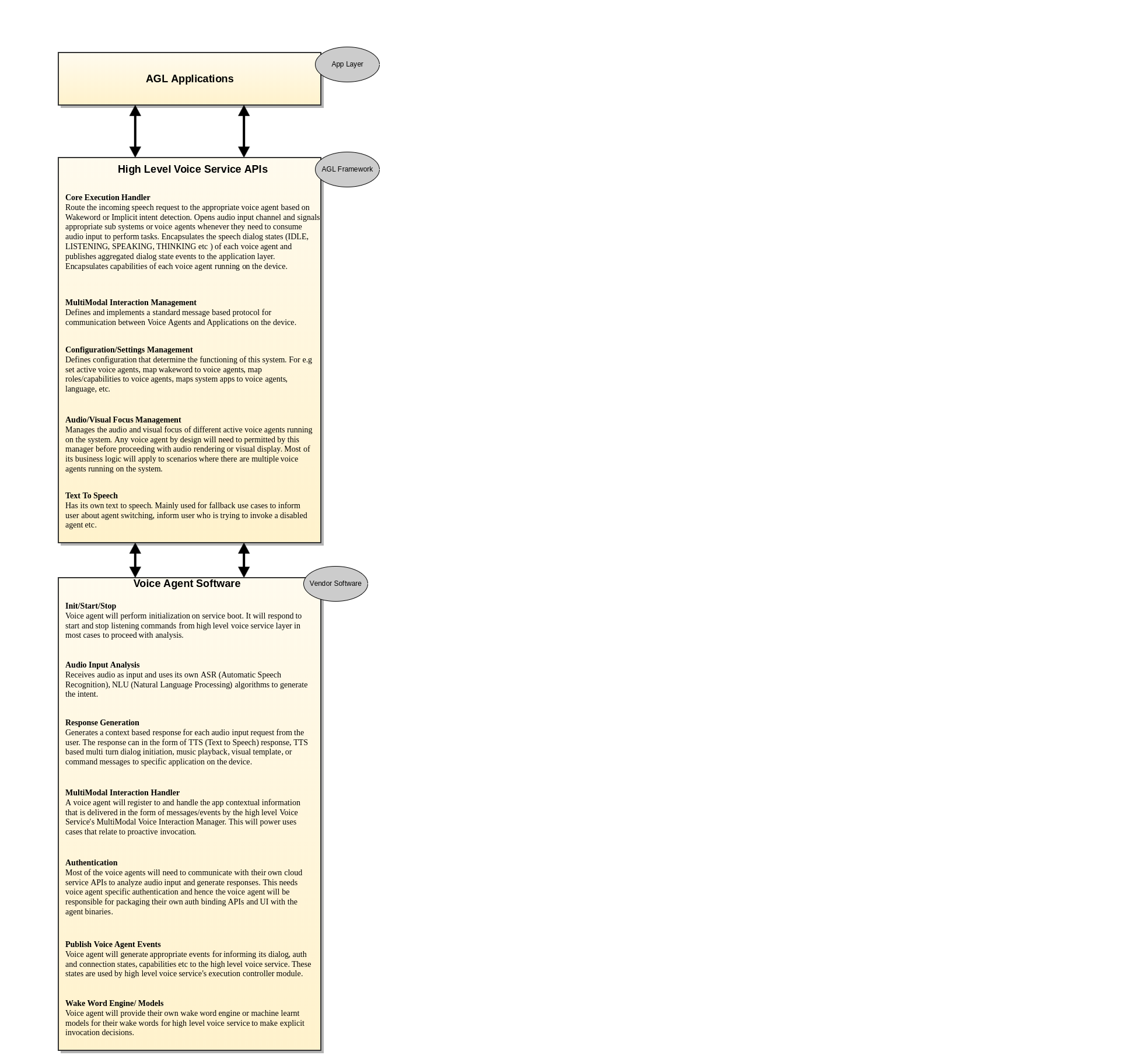

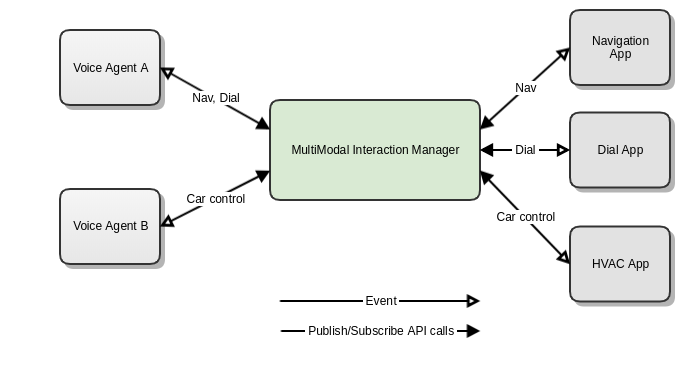

The Voice Services architecture in AGL is layered into two levels: the High Level Voice Service layer and the vendor software layer. In the above architecture, the high-level voice service is composed of multiple binding APIs (colored in green) that abstract the functioning of all the voice assistants running on the system. The vendor software layer consists of vendor-specific voice agent software implementations that comply with the Voice Agent Binding APIs.

Experience A: With only one active voice agent at a time. The user selects the active agent.

Assumption: Voice agents are initialized and running, and are discoverable and registered with afb-voiceservice-highlevel. The afb-voiceservice-highlevel binding has subscribed to all the events of the active voiceagent-binding and vice versa. afb-voiceservice-highlevel is assigned the audio input role by the audio high level binding.

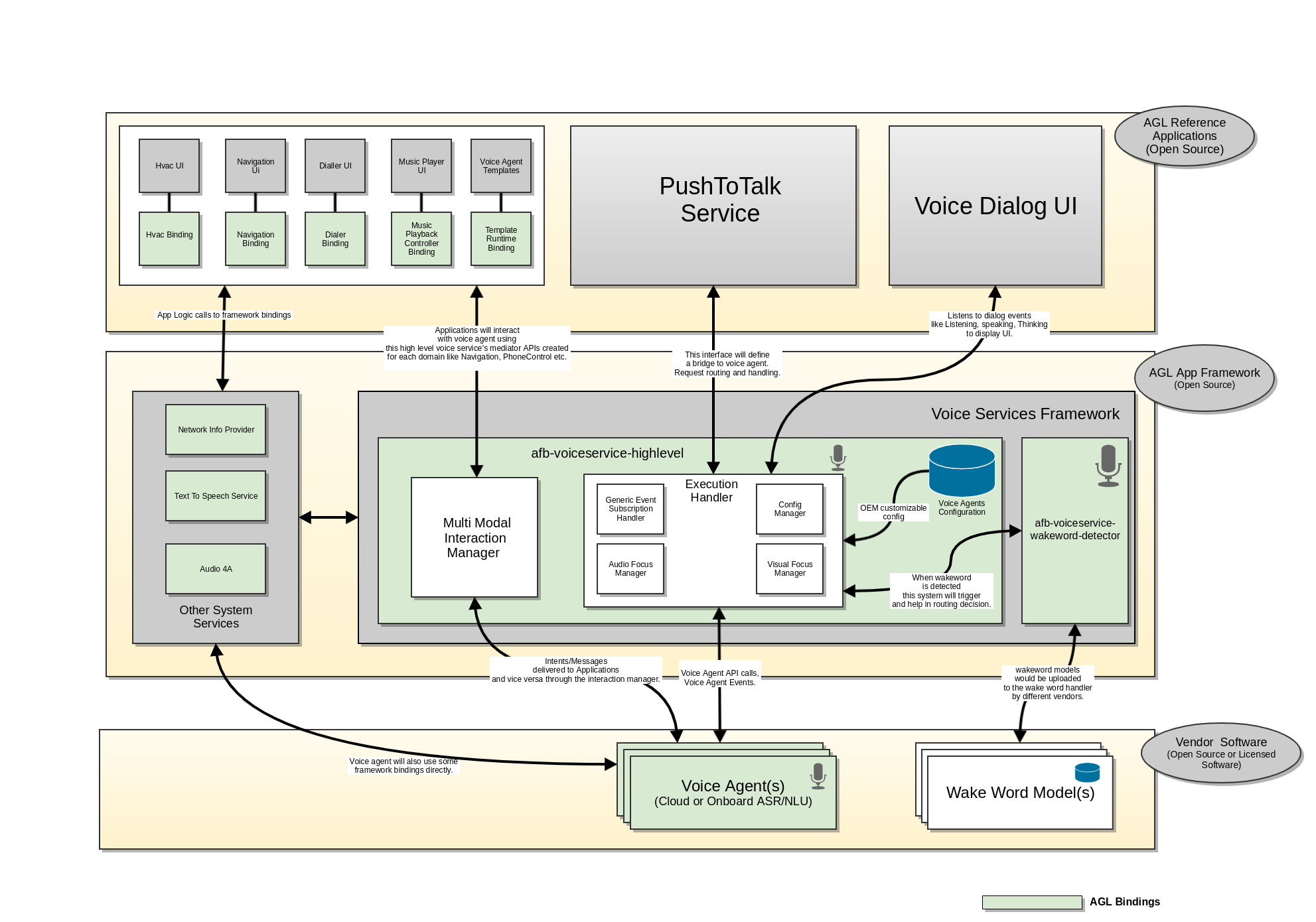

The afb-voiceservice-highlevel startListening API is triggered by a Push-To-Talk button invocation.

The Voice Services architecture in AGL is layered into two levels: the High Level Voice Service component and the vendor software components with Voice Agents and Wake Word detection solutions. In the above architecture, the high level voice service is composed of multiple bindings (colored in green) that will be part of the AGL framework. The vendor software layer consists of the voice agent binding and the wake word binding that host the vendor-specific voice assistant software. The system gives voice assistant vendors the flexibility to provide their software as code or binary as long as they abide by the Voice Agent API specifications.

Below is a technical description of each of these bindings in both levels.

The high level voice service primarily runs in the following two well-known modes.

This design makes no assumptions on the mode in which the high level voice service component is configured and running.

This binding has the following responsibilities.

The following diagrams dive a little deeper into the low-level components of the high level voice service (VSHL) and their dependencies, depicted by directional arrows. A dependency in this case can be either an association of objects between components or an interface implementation relationship.

For example:

A depends on B if A aggregates or composes B.

A depends on B if A implements an interface that is used by B to talk to A.


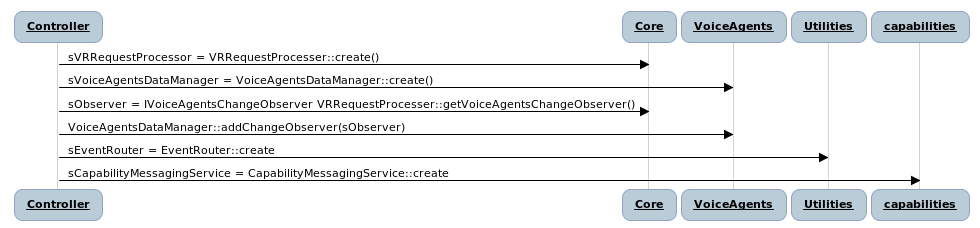

On load, the controller will instantiate the entry-level classes of each module and inject their dependencies. For example, the Core module observes changes to voice agent data in the VoiceAgent module.

vshl/startListening

Starts listening for speech input. As a part of request, common configuration related information is passed.

Note: The config inputs below are just examples and not the final list of configurations.

Request: {

}

Responses: {

"jtype":"afb-reply",

"request": {

"status":"string" // success or bad-state or bad-request

}

"response":{

"request_id": "string" // Request created by this call.

"agent_id": "string" // Agent to which the request has been proxied.

}

}
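As an illustration of how a client might consume the response above, the following is a minimal sketch in Python. It assumes the reply arrives as a JSON string shaped like the schema above; the function name, the example values, and the choice to raise on any non-success status are assumptions made for this sketch and are not part of the proposed API.

```python
import json


def parse_start_listening_reply(raw: str) -> dict:
    """Return request_id and agent_id from a vshl/startListening reply."""
    reply = json.loads(raw)
    if reply.get("jtype") != "afb-reply":
        raise ValueError("not an afb-reply message")
    status = reply.get("request", {}).get("status")
    if status != "success":
        # Assumption: bad-state and bad-request replies carry no response body.
        raise RuntimeError(f"startListening failed with status: {status}")
    response = reply["response"]
    return {"request_id": response["request_id"], "agent_id": response["agent_id"]}


# Hypothetical reply, shaped like the response schema above.
EXAMPLE_REPLY = json.dumps({
    "jtype": "afb-reply",
    "request": {"status": "success"},
    "response": {"request_id": "req-1", "agent_id": "agent-1"},
})
```

A caller would typically keep the returned request_id to correlate later events with this request.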

vshl/cancelListening

Cancels the speech recognition processing in the chosen agent.

If agent id is not passed then the cancel request is sent to the default voice agent.

Request:

{

}

Responses:

{

"jtype":"afb-reply",

"request":{

"status":"string" // success or bad-state or bad-request

}

}

vshl/subscribe

Subscribe/Unsubscribe to voice service high level events.

"permission": "urn:AGL:permission:speech:public:accesscontrol"

Request:

{

{

"type":"array",

"items" : [{

"type":"string" // List of events to subscribe to

}

]

},

{

"subscribe":"boolean"

}

}

Responses:

{

"jtype":"afb-reply",

"request":{

"status":"string" // success or bad-state or bad-request

}

}

The High Level Voice Service layer subscribes to similar states from each of the voice agents and provides agent-agnostic states back to the application layer.

For example, if Alexa is disconnected from its cloud due to issues and the Nuance voice agent is connected, then the connection state would still be reported as CONNECTED to the application layer.
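The aggregation described above could be sketched as follows. This is only an illustration: the document states that one connected agent is enough to report CONNECTED, but the relative order of the remaining states is an assumption of this sketch, as are the function and variable names.

```python
# Assumed precedence: the "best" state across all agents is reported.
# Only the CONNECTED-wins rule comes from the text above.
STATE_PRIORITY = [
    "CONNECTED",
    "PENDING",
    "CONNECTION_TIMEDOUT",
    "CONNECTION_ERROR",
    "DISCONNECTED",
]


def aggregate_connection_state(agent_states: dict) -> str:
    """Collapse per-agent connection states into one agent-agnostic state."""
    if not agent_states:
        return "DISCONNECTED"
    # list.index raises ValueError for a state that is not in the list.
    return min(agent_states.values(), key=STATE_PRIORITY.index)
```

With this rule, a system where Alexa is DISCONNECTED and another agent is CONNECTED reports CONNECTED to the application layer.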

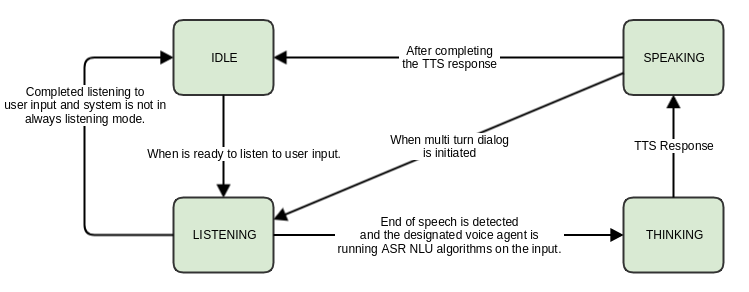

Dialog state describes the state of the currently active voice agent's dialog interaction.

Event Data:

{

"name" : "voice_dialogstate_event"

"state":"string"

"agent_id": "integer"

}

Values for state are

1) IDLE

High level voice service is ready for speech interaction.

2) LISTENING

High level voice service is currently listening.

3) THINKING

A customer request has been completed and no more input is accepted. In this state, Voice service is working on a response.

4) SPEAKING

Responding to a request with speech.
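A subscriber might map the dialog state event onto a typed value before acting on it. The sketch below assumes the event is delivered as a dictionary with the fields listed above; the helper names and the rule that a new interaction should only start from IDLE are assumptions for illustration.

```python
from enum import Enum


class DialogState(Enum):
    IDLE = "IDLE"
    LISTENING = "LISTENING"
    THINKING = "THINKING"
    SPEAKING = "SPEAKING"


def parse_dialog_state_event(event: dict) -> DialogState:
    """Convert a voice_dialogstate_event payload into a DialogState."""
    if event.get("name") != "voice_dialogstate_event":
        raise ValueError("not a dialog state event")
    # Enum lookup by value raises ValueError for an unknown state string.
    return DialogState(event["state"])


def can_start_listening(state: DialogState) -> bool:
    # Assumption: only IDLE means the service is ready for a new interaction.
    return state is DialogState.IDLE
```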

Connection state describes the state of the voice agent along with errors.

Event Data:

{

"name" : "voice_connectionstate_event"

"state":"string"

"agent_id": "integer"

}

1) DISCONNECTED

Voice agent is not connected to its voice service endpoint.

2) PENDING

Voice agent is attempting to establish connection to its endpoint.

3) CONNECTED

Voice agent is connected to its endpoint.

4) CONNECTION_TIMEDOUT

Voice agent connection attempt failed due to excessive load on its server endpoint.

5) CONNECTION_ERROR

Captures other network related errors.

Auth state describes the state of the authorization of the voice agent with its cloud endpoint.

Event Data:

{

"name" : "voice_authstate_event"

"state":"string"

"agent_id": "integer"

}

1) UNINITIALIZED

Authorization not yet acquired.

2) REFRESHED

Authorization has been refreshed.

3) EXPIRED

Authorization has expired.

4) ERROR

Authorization error has occurred.

This is an important part of the afb-voiceservice-highlevel binding that acts as a mediator between the voice agents and applications. Communication is through messages, and architecturally this binding implements a topic-based publisher-subscriber pattern. The topics can be mapped to the different capabilities of the voice agents.

{

Topic : "{{STRING}}" // Topic or the type of the message

Action: "{{STRING}}" // The actual action that needs to be performed by the subscriber.

RequestId: "{{STRING}}" // The request ID associated with this message.

Payload: "{{OBJECT}}" // Payload

}

Voice Agent to Applications are downstream messages and Applications to Voice Agent are upstream messages.

Voice agents and applications will have to subscribe to a topic and specific actions within that topic.
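The topic-based publisher-subscriber pattern described above can be sketched in a few lines. This is an in-process illustration only, not the binding's implementation: the class name, method names, and the use of plain callbacks are assumptions, while the Topic and Action keys follow the message format above.

```python
from collections import defaultdict
from typing import Callable, Iterable


class MessageBroker:
    """Routes messages to subscribers of a (topic, action) pair."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, actions: Iterable[str],
                  callback: Callable[[dict], None]) -> None:
        # A subscriber registers for specific actions within one topic.
        for action in actions:
            self._subscribers[(topic, action)].append(callback)

    def publish(self, message: dict) -> int:
        """Deliver the message and return the number of subscribers reached."""
        key = (message["Topic"], message["Action"])
        callbacks = self._subscribers.get(key, [])
        for callback in callbacks:
            callback(message)
        return len(callbacks)
```

For example, a phone application could subscribe to the "dial" action of the "phonecontrol" topic, and a voice agent publishing a dial message would reach only that subscriber.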

vshl/phonecontrol/publish - For publishing phone control messages below.

vshl/phonecontrol/subscribe - For subscribing to phone control messages below.

Downstream

{

Topic : "phonecontrol"

Action : "dial"

Payload : {

"callId": "{{STRING}}", // A unique identifier for the call

"callee": { // The destination of the outgoing call

"details": "{{STRING}}", // Descriptive information about the callee

"defaultAddress": { // The default address to use for calling the callee

"protocol": "{{STRING}}", // The protocol for this address of the callee (e.g. PSTN, SIP, H323, etc.)

"format": "{{STRING}}", // The format for this address of the callee (e.g. E.164, E.163, E.123, DIN5008, etc.)

"value": "{{STRING}}", // The address of the callee.

},

"alternativeAddresses": [{ // An array of alternate addresses for the existing callee

"protocol": "{{STRING}}", // The protocol for this address of the callee (e.g. PSTN, SIP, H323, etc.)

"format": "{{STRING}}", // The format for this address of the callee (e.g. E.164, E.163, E.123, DIN5008, etc.)

"value": "{{STRING}}", // The address of the callee.

}],

"required": [ "callId", "callee", "callee.defaultAddress", "address.protocol", "address.value" ]

}

}

Upstream

{

Topic : "phonecontrol"

Action : "call_activated"

Payload : {

"callId": "{{STRING}}", // A unique identifier for the call

"required": [ "callId"]

}

}

{

Topic : "phonecontrol"

Action : "call_failed"

Payload : {

"callId": "{{STRING}}", // A unique identifier for the call

"error": "{{STRING}}", // The error code (see error codes below)

"message": "{{STRING}}", // A description of the error

"required": [ "callId", "error"]

}

}

Error codes:

4xx range: Validation failure for the input from the dial message

500: Internal error on the platform unrelated to the cellular network

503: Error on the platform related to the cellular network
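To make the required-field list of the dial message and the 4xx validation error concrete, here is a hedged sketch of how a phone application might check an incoming dial payload and build a call_failed message. The function names and the example values are hypothetical; the field names and the required list come from the messages above.

```python
def missing_dial_fields(payload: dict) -> list:
    """Return the required dial fields that are absent from the payload."""
    missing = []
    if "callId" not in payload:
        missing.append("callId")
    callee = payload.get("callee")
    if callee is None:
        missing.append("callee")
        return missing
    address = callee.get("defaultAddress")
    if address is None:
        missing.append("callee.defaultAddress")
        return missing
    for field in ("protocol", "value"):
        if field not in address:
            missing.append(f"address.{field}")
    return missing


def make_call_failed(call_id: str, error: str, message: str) -> dict:
    """Build a call_failed message for the phonecontrol topic."""
    return {
        "Topic": "phonecontrol",
        "Action": "call_failed",
        "Payload": {"callId": call_id, "error": error, "message": message},
    }
```

An application could respond to a dial message with missing fields by publishing make_call_failed with an error code in the 4xx range.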

{

Topic : "phonecontrol"

Action : "call_terminated"

Payload : {

"callId": "{{STRING}}", // A unique identifier for the call

"required": [ "callId"]

}

}

{

Topic : "phonecontrol"

Action : "connection_state_changed"

Payload : {

"callId": "{{STRING}}", // A unique identifier for the call

"required": [ "callId"]

}

}

vshl/navigation/publish - For publishing navigation messages.

vshl/navigation/subscribe - For subscribing to navigation messages.

Downstream

{

Topic : "navigation"

Action : "set_destination"

Payload : {

"destination": {

"coordinate": {

"latitudeInDegrees": {{DOUBLE}},

"longitudeInDegrees": {{DOUBLE}}

},

"name": "{{STRING}}",

"singleLineDisplayAddress": "{{STRING}}",

"multipleLineDisplayAddress": "{{STRING}}"

}

}

}

{

Topic : "navigation"

Action : "cancel_navigation"

}
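A voice agent could assemble the set_destination message along these lines. The sketch assumes the message is built as a dictionary before being published; the function name, the empty-string defaults for the display addresses, and the coordinate range check are assumptions added for illustration.

```python
def make_set_destination(latitude: float, longitude: float, name: str,
                         single_line_address: str = "",
                         multiple_line_address: str = "") -> dict:
    """Build a navigation set_destination message."""
    # Geographic coordinates are bounded; reject values outside the valid range.
    if not -90.0 <= latitude <= 90.0:
        raise ValueError("latitude must be within [-90, 90] degrees")
    if not -180.0 <= longitude <= 180.0:
        raise ValueError("longitude must be within [-180, 180] degrees")
    return {
        "Topic": "navigation",
        "Action": "set_destination",
        "Payload": {
            "destination": {
                "coordinate": {
                    "latitudeInDegrees": latitude,
                    "longitudeInDegrees": longitude,
                },
                "name": name,
                "singleLineDisplayAddress": single_line_address,
                "multipleLineDisplayAddress": multiple_line_address,
            }
        },
    }
```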

vshl/guimetadata/publish - For publishing UI metadata messages for rendering.

vshl/guimetadata/subscribe - For subscribing to UI metadata messages for rendering.

Downstream

{

Topic : "guimetadata"

Action : "render_template"

Payload : {

<Yet to be standardized>

}

}

{

Topic : "guimetadata"

Action : "clear_template"

Payload : {

<Yet to be standardized>

}

}

{

Topic : "guimetadata"

Action : "render_player_info"

Payload : {

<Yet to be standardized>

}

}

{

Topic : "guimetadata"

Action : "clear_player_info"

Payload : {

<Yet to be standardized>

}

}

vshl/enumerateVoiceAgents

"permission": "urn:AGL:permission:vshl:voiceagents:public"

Enumerates and returns an array of the voice agents running in the system. Applications such as settings might need this to present a UI with a list of agents to enable or disable, show status, etc.

Request:

{ }

Responses: {

"jtype":"afb-reply",

"request": {

"status":"string" // success or bad-state or bad-request

}

"response": {

"type":"array",

"items" :

[

{

"name":"string",

"description":"string",

"agent_id":"integer" // Voice agent ID

"status":"string" // enabled, disabled

}

]

}

}
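A settings application might filter the enumerated agents before presenting them. The sketch below assumes the reply has already been decoded into a dictionary and that the response field is a plain array of agent objects as the schema above suggests; the function name and the sample agents are hypothetical.

```python
def enabled_agents(reply: dict) -> list:
    """Return the agents marked as enabled in an enumerateVoiceAgents reply."""
    if reply.get("request", {}).get("status") != "success":
        return []
    return [agent for agent in reply.get("response", [])
            if agent.get("status") == "enabled"]


# Hypothetical reply with two registered agents.
EXAMPLE_AGENTS_REPLY = {
    "jtype": "afb-reply",
    "request": {"status": "success"},
    "response": [
        {"name": "agent-a", "description": "First agent", "agent_id": 1, "status": "enabled"},
        {"name": "agent-b", "description": "Second agent", "agent_id": 2, "status": "disabled"},
    ],
}
```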

vshl/setDefaultVoiceAgent

Activate or deactivate a voice agent.

"permission": "urn:AGL:permission:vshl:voiceagents:public"

Request:

{

"agent_id":"integer"

"is_active":"boolean"

}

Responses: {

"jtype":"afb-reply",

"request":

{

"status":"string" // success or bad-state or bad-request

}

}

Provides an interface primarily for the core afb-voiceservice-highlevel to listen for wakeword detection events and make request routing decisions.

voiceagent/setup

This API is exposed to high level voice service to pass any setup or high level config information like agent_id to the voice agent.

"permission": "urn:AGL:permission:speech:public:accesscontrol"

Request:

{

"agent_id":"integer"

"language":"string"

}

Responses:

{

"jtype":"afb-reply",

"request":{

"status":"string" // success or bad-state or bad-request

}

}

voiceagent/cancel

Stop the voice agent and its currently running speech recognition processes.

"permission": "urn:AGL:permission:speech:public:accesscontrol"

Request:

{

}

Responses:

{

"jtype":"afb-reply",

"request":{

"status":"string" // success or bad-state or bad-request

}

}

voiceagent/startListening

Starts listening for speech input. As part of the request, common configuration information is passed.

Note: The config inputs below are just examples and not the final list of configurations.

"permission": "urn:AGL:permission:speech:public:audiocontrol"

Request:

{

"request_id": "string" // Request ID assigned by the high level voice service.

"language":"string"

"location":"string"

"preferred_network_mode":"string" // online, offline, hybrid

"audio_input_device": "string" // ID of the alsa device to read the input

}

Responses:

{

"jtype":"afb-reply",

"request":{

"status":"string" // success or bad-state or bad-request

}

}
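The high level voice service could build the voiceagent/startListening request as sketched below. The field names and the three network modes come from the request above, which is itself marked as an example list of configurations; the function name and the validation step are assumptions of this sketch.

```python
NETWORK_MODES = {"online", "offline", "hybrid"}


def make_agent_start_listening_request(request_id: str, language: str,
                                       location: str,
                                       preferred_network_mode: str,
                                       audio_input_device: str) -> dict:
    """Build the request body for voiceagent/startListening."""
    if preferred_network_mode not in NETWORK_MODES:
        raise ValueError(f"unknown network mode: {preferred_network_mode}")
    return {
        "request_id": request_id,  # assigned by the high level voice service
        "language": language,
        "location": location,
        "preferred_network_mode": preferred_network_mode,
        "audio_input_device": audio_input_device,  # ALSA device to read from
    }
```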

Voice agent will notify its clients that end of speech is detected.

Event Data:

{

"name" : "voiceagent_endofspeechdetected_event"

"agent_id": "integer"

"request_id": "string" // The request for which the end of speech is detected

}

Dialog state describes the state of the currently active voice agent's dialog interaction.

Event Data:

{

"name" : "voiceagent_dialogstate_event"

"state":"string"

"agent_id": "integer"

"request_id": "string" // The request that caused this dialog state transition.

}

Values for state are

1) IDLE

High level voice service is ready for speech interaction.

2) LISTENING

High level voice service is currently listening.

3) THINKING

A customer request has been completed and no more input is accepted. In this state, Voice service is working on a response.

4) SPEAKING

Responding to a request with speech.

Connection state describes the state of the voice agent along with errors.

Event Data:

{

"name" : "voiceagent_connectionstate_event"

"state":"string"

"agent_id": "integer"

}

1) DISCONNECTED

Voice agent is not connected to its voice service endpoint.

2) PENDING

Voice agent is attempting to establish connection to its endpoint.

3) CONNECTED

Voice agent is connected to its endpoint.

4) CONNECTION_TIMEDOUT

Voice agent connection attempt failed due to excessive load on its server endpoint.

5) CONNECTION_ERROR

Captures other network related errors.

Auth state describes the state of the authorization of the voice agent with its cloud endpoint if any.

Event Data:

{

"name" : "voiceagent_authstate_event"

"state":"string"

"agent_id": "integer"

}

1) UNINITIALIZED

Authorization not yet acquired.

2) REFRESHED

Authorization has been refreshed.

3) EXPIRED

Authorization has expired.

4) ERROR

Authorization error has occurred.

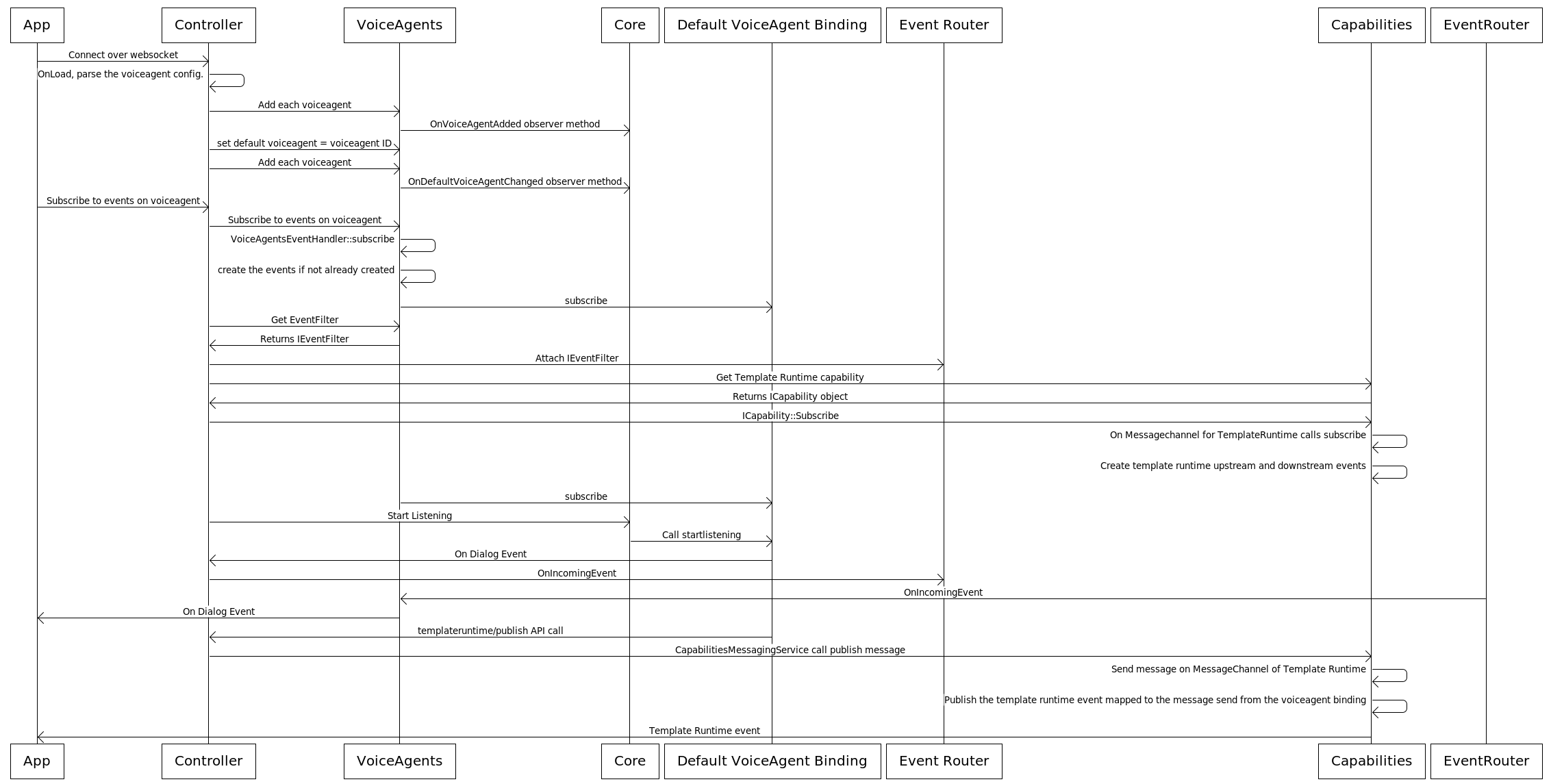

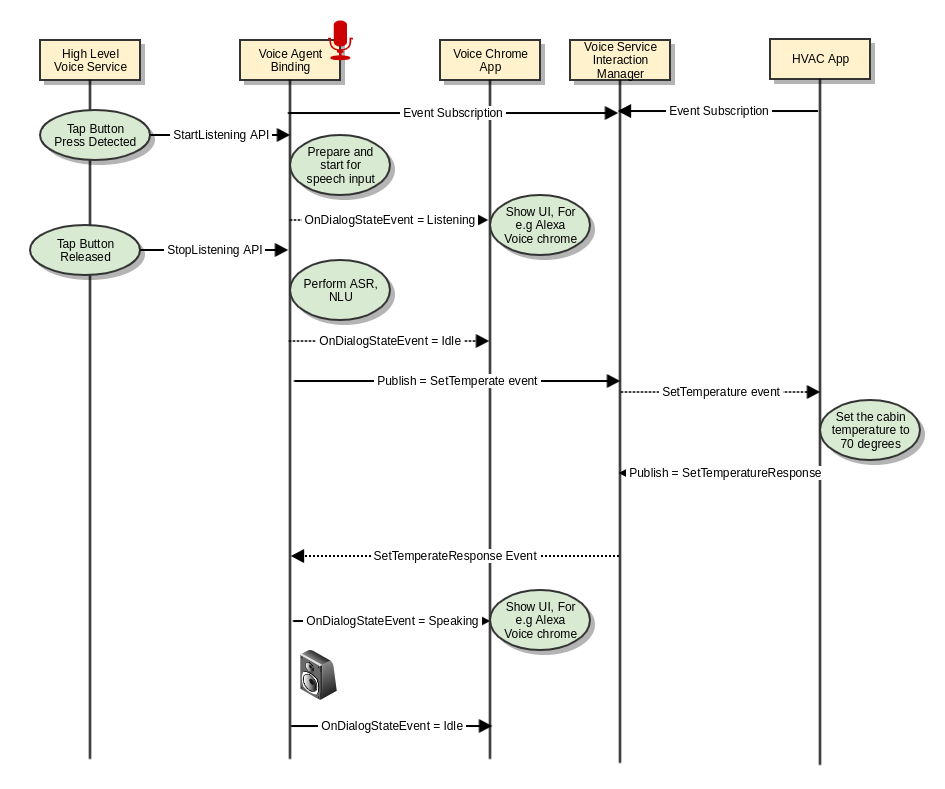

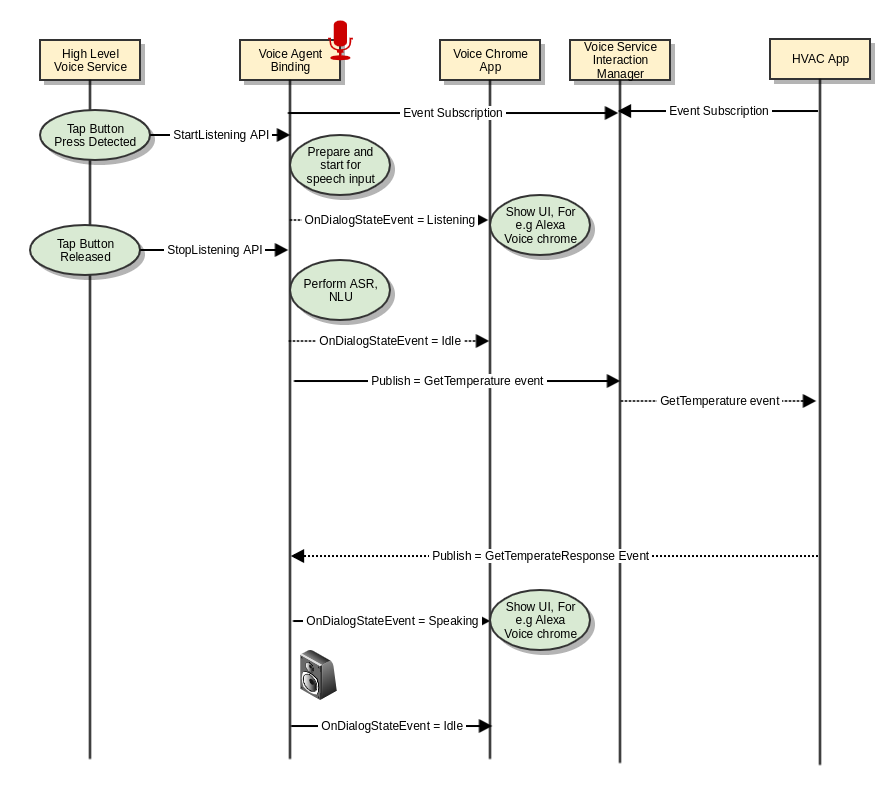

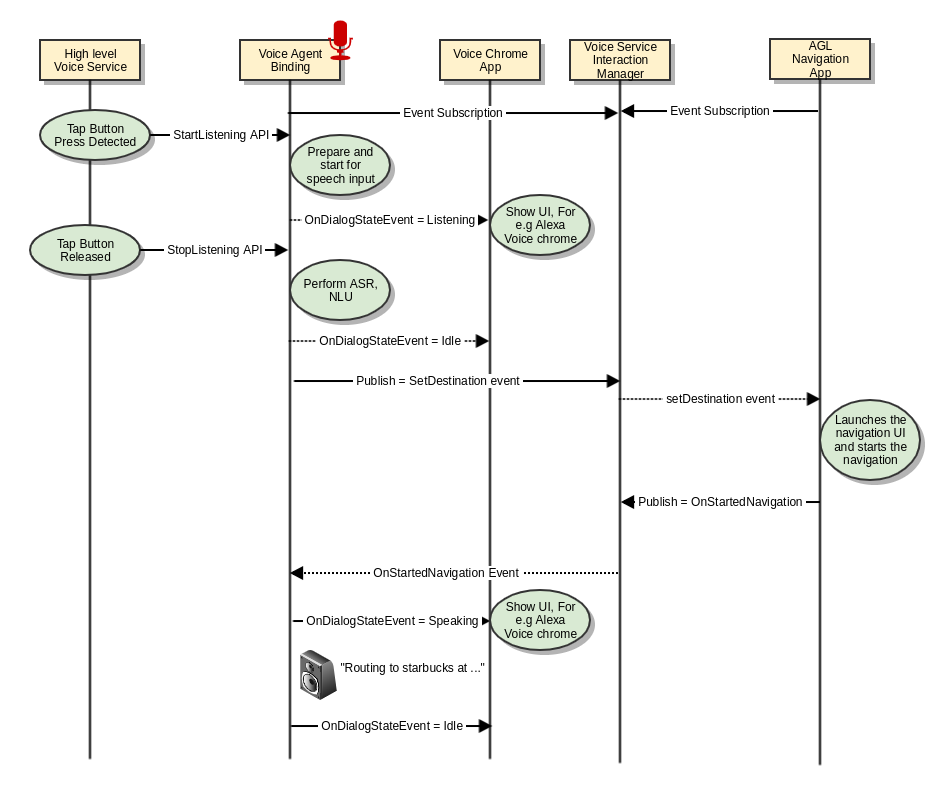

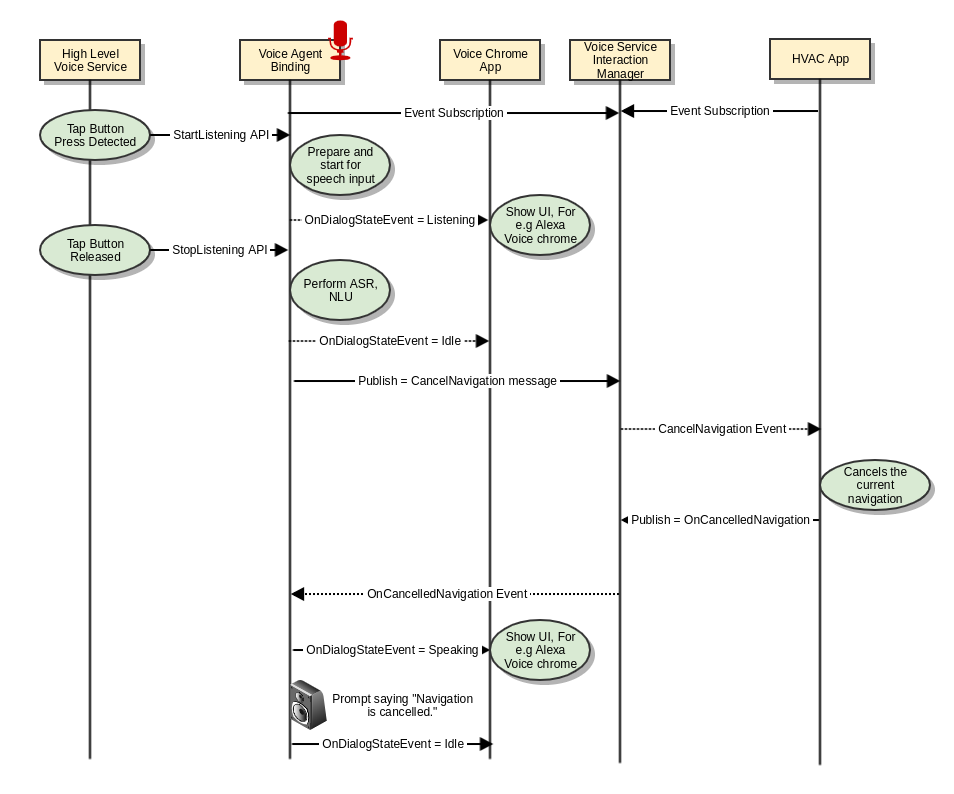

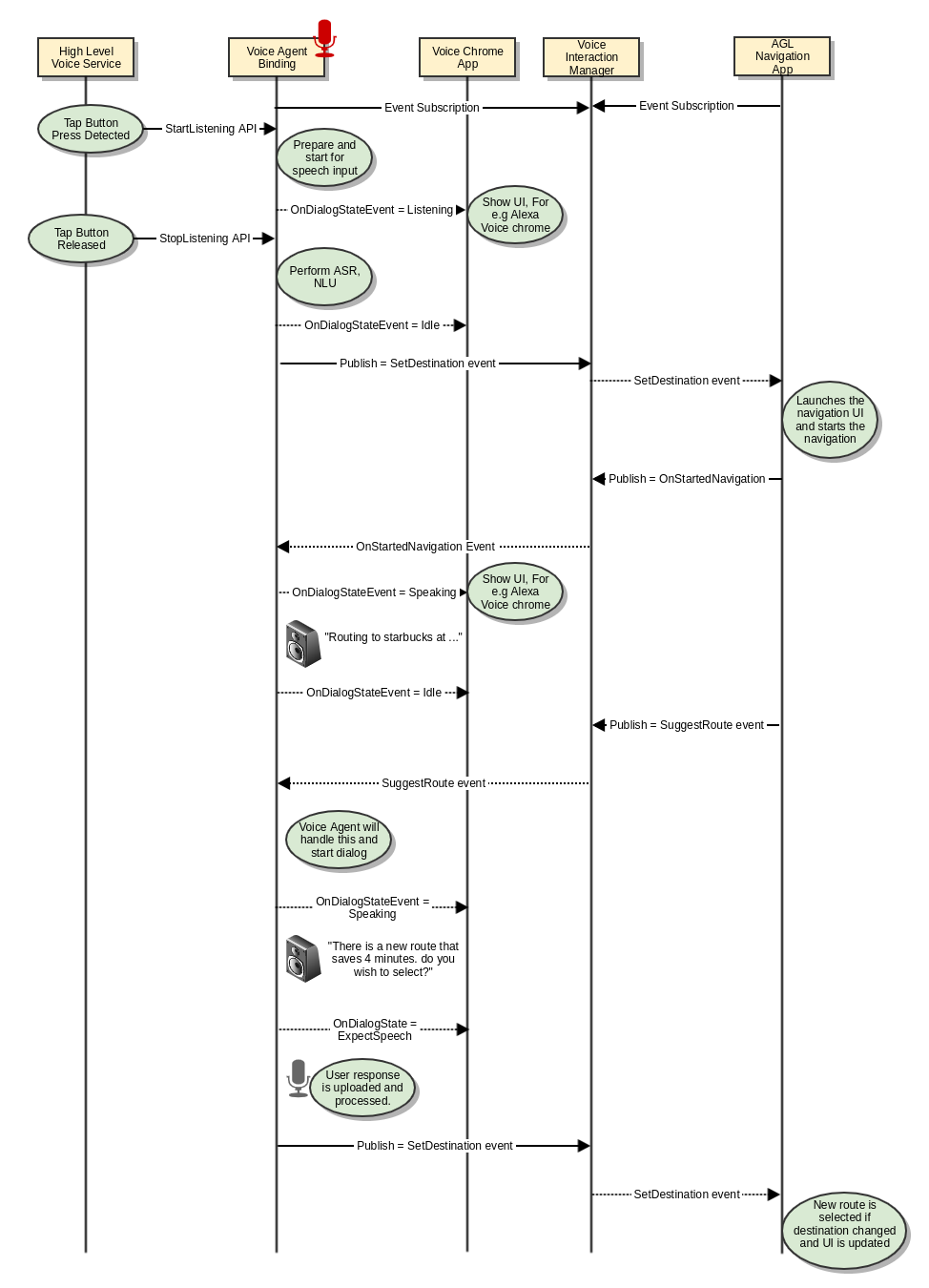

Note: The role of the high level voice service binding is not depicted in the flows below for ease of understanding. All the flows assume that the user chose "hold to talk" to initiate the speech flow. There will be slight modifications, as explained in the high level workflow above, if the tap-to-talk or wake word options are used.

| No. | Use Case | Description |
| --- | --- | --- |
| 1 | CC - on/off | Turn on or off the climate control (e.g. turn off climate control) |
| 2 | CC - specific temperature | Set the car's temperature to 70 degrees (e.g. set the temperature to 70) |
| 3 | CC - target range | Set the car's heating to a set gradient (e.g. set the heat to high) |
| 4 | CC - min / max temperature | Set the car's temperature to max or min A/C (or heat) (e.g. set the A/C to max) |
| 5 | CC - increase / decrease temperature | Increase or decrease the car's temperature (e.g. increase the temperature) |
| 6 | CC - specific fan speed | Set the fan to a specific value (e.g. set the fan speed to 3) |
| 7 | CC - target range | Set the fan to a specific value (e.g. set the fan speed to high) |
| 8 | CC - min / max fan speed | Set the fan to min / max (e.g. set the fan to max) |
| 9 | CC - increase / decrease fan speed | Increase / decrease the fan speed (e.g. increase the air flow) |
| 10 | CC - Temp Status | What is the current temperature of the car (e.g. how hot is it in my car?) |
| 11 | CC - Fan Status | Determine the fan setting (e.g. what's the fan set to?) |

"Set the cabin temperature to 70 degrees"

"Set the car's temperature to max or min A/C (or heat)"

"How hot is it in my car?"

| No. | Use Case | Description |
| --- | --- | --- |
| 1 | Set Destination | Notify the navigation application to route to the specified destination. For example: "Navigate to nearest Starbucks", "Navigate to my home". |
| 2 | Cancel Navigation | Cancel the navigation based on touch input or voice. For example, the user can say "cancel navigation", or cancel the navigation by interacting with the navigation application directly on the device using touch inputs. |
| 3 | Suggest Alternate Route | Suggest an alternate route to the user and proceed as per user preference. For example: "There is an alternate route available that is 4 minutes faster. Do you wish to select it?" When the user says "No", continue the current navigation; when the user says "Yes", proceed with navigation on the alternate route. |

This use case is currently unsupported by Alexa. It is a high-level proposal on how the interaction is supposed to work. Alternatively, the AGL navigation app can use the STT and TTS APIs (out of scope for this document) with some minimal NLU to enable similar behavior.

Alexa Demo on Renesas board.